There is a specific flavor of nausea reserved for serverless engineering teams. It usually strikes at 2 a.m., shortly after a major product launch, when someone posts a triumphant screenshot of user traffic in Slack. While the marketing team is virtually high-fiving, CloudWatch quietly begins to draw a perfect, vertical line that looks less like a growth chart and more like a cliff edge.

Your SQS queues swell. Lambda invocations crawl. Suddenly, the phrase “fully managed service” sounds less comforting and more like a cruel punchline delivered by a distant cloud provider.

For years, the relationship between Amazon SQS and AWS Lambda has been the backbone of event-driven architecture. You wire up an event source mapping, let Lambda poll the queue, and trust the system to scale as messages arrive. Most days, this works beautifully. On the wrong day, under the wrong kind of spike, it works “eventually.”

But in the world of high-frequency trading or flash sales, “eventually” is just a polite synonym for “too late.”

With the release of AWS Lambda SQS Provisioned Mode on November 14, Amazon is finally admitting that sometimes magic is too slow. It grants you explicit control over the invisible workers that poll SQS for your function. It ensures they are already awake, caffeinated, and standing in line before the mob shows up. It allows you to trade a bit of extra planning (and money) for the guarantee that your system won’t hit the snooze button while your backlog turns into a towering monument to failure.

The uncomfortable truth about standard SQS polling

To understand why we need Provisioned Mode, we have to look at the somewhat lazy nature of the standard behavior.

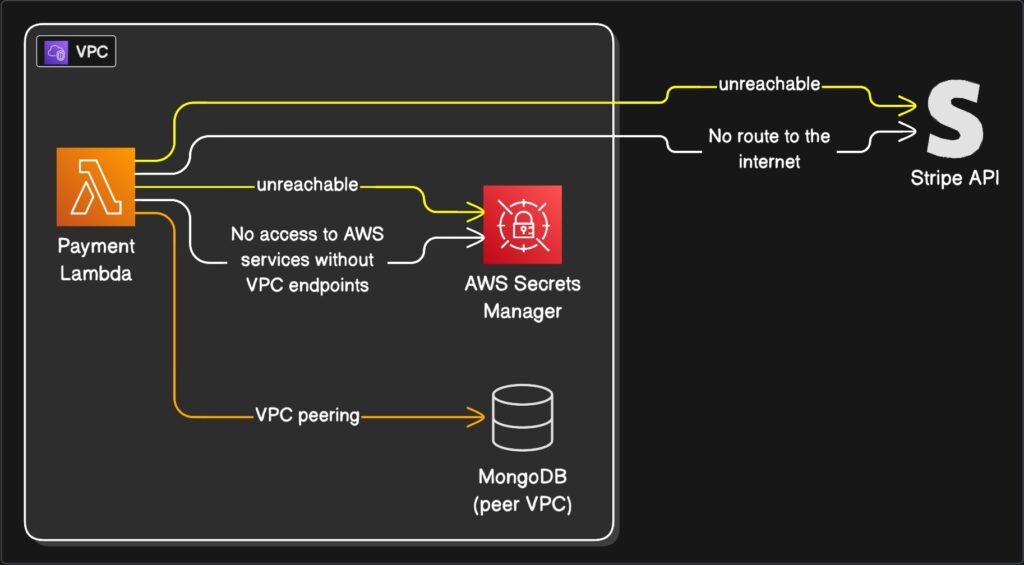

Out of the box, Lambda uses an event source mapping to poll SQS on your behalf. You give it a queue and some basic configuration, and Lambda spins up pollers to check for work. You never see these pollers. They are the ghosts in the machine.

The problem with ghosts is that they are not particularly urgent. When a massive spike hits your queue, Lambda realizes it needs more pollers and more concurrent function invocations. However, it does not do this instantly. It ramps up. It adds capacity in increments, like a cautious driver merging onto a freeway.

For a steady workload, you will never notice this ramp-up. But during a viral marketing campaign or a market crash, those minutes of warming up feel like an eternity. You are essentially watching a barista who refuses to start grinding coffee beans until the line of customers has already curled around the block.
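To put rough numbers on that ramp-up, here is a small sketch. It assumes capacity is added linearly at a fixed rate per minute, which is a simplification. Both rates are illustrative placeholders rather than published limits, and `minutes_to_reach` is a made-up helper name.

```python
import math


def minutes_to_reach(target_concurrency: int, added_per_minute: int, start: int = 0) -> int:
    """Whole minutes a linear ramp needs to go from `start` to `target_concurrency`."""
    if added_per_minute <= 0:
        raise ValueError("added_per_minute must be positive")
    missing = max(0, target_concurrency - start)
    return math.ceil(missing / added_per_minute)


# Hypothetical ramp rates, in concurrent invocations added per minute.
CAUTIOUS_RAMP = 300
EAGER_RAMP = 1000

print(minutes_to_reach(1200, CAUTIOUS_RAMP))  # 4
print(minutes_to_reach(1200, EAGER_RAMP))     # 2
```

Two extra minutes sounds trivial until you multiply it by the arrival rate of the spike that caused it.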

Standard SQS polling gives you tools like batch size, but it gives you no direct control over how quickly consumption ramps up. You cannot tell the system, “I need ten workers ready right now.” You can only stand in line and hope the algorithm notices you are drowning.

This is acceptable for background jobs like resizing images or sending emails. It is decidedly less acceptable for payment processing or fraud detection. In those cases, watching twenty thousand messages pile up while your system “automatically scales” is not an architectural feature. It is a resume-generating event.

Paying for a standing army instead of volunteers

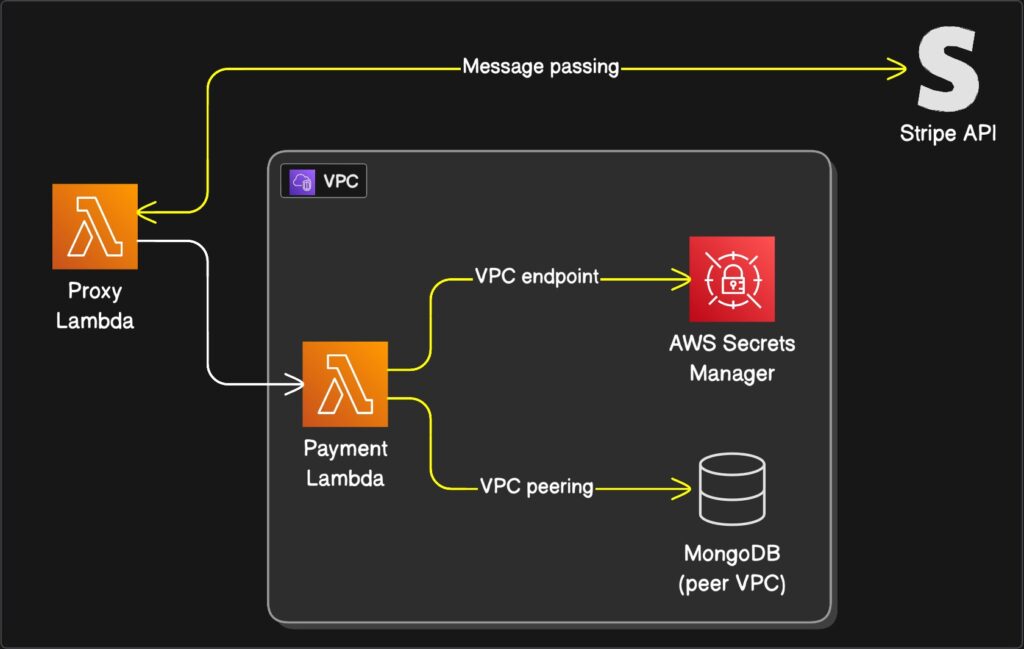

Provisioned Mode flips the script on this reactive behavior. Instead of letting Lambda decide how many pollers to use based purely on demand, you tell it the minimum and maximum number of event pollers you want reserved for that queue.

An event poller is a dedicated worker that reads from SQS and hands batches of messages to your function. In standard mode, these pollers are summoned from a shared pool when needed. In Provisioned Mode, you are paying to keep them on retainer.

Think of it as the difference between calling a ride-share service and hiring a private driver to sit in your driveway with the engine running. One is efficient for the general public; the other is necessary if you need to leave the house in exactly three seconds.

The benefits are stark when translated into human terms.

First, you get speed. AWS advertises significantly faster scaling for SQS event source mappings in Provisioned Mode. We are talking about adding up to one thousand new concurrent invocations per minute.

Second, you get capacity. AWS quotes up to 20,000 concurrent invocations per SQS mapping in Provisioned Mode, roughly sixteen times the default ceiling.

Third, and perhaps most importantly, you get predictability. A single poller is not just a warm body. It is a unit of throughput, handling up to 1 MB per second or 10 concurrent invocations. By setting a minimum number of pollers, you reserve a baseline of throughput that you can actually calculate. You are no longer hoping the waiters show up; you have paid their salaries in advance.
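The per-poller figures above make the baseline easy to compute. The sketch below simply multiplies them out. `Baseline` and `baseline_for` are hypothetical names, and the numbers are upper bounds per poller, so real throughput depends on your message sizes and function duration.

```python
from dataclasses import dataclass

# Per-poller ceilings quoted in the text above.
MB_PER_SECOND_PER_POLLER = 1.0
INVOKES_PER_POLLER = 10


@dataclass(frozen=True)
class Baseline:
    megabytes_per_second: float
    concurrent_invokes: int


def baseline_for(min_pollers: int) -> Baseline:
    """Upper-bound capacity that is kept ready by `min_pollers` pollers."""
    if min_pollers < 0:
        raise ValueError("min_pollers cannot be negative")
    return Baseline(
        megabytes_per_second=min_pollers * MB_PER_SECOND_PER_POLLER,
        concurrent_invokes=min_pollers * INVOKES_PER_POLLER,
    )


print(baseline_for(5))
```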

Configuring this without losing your mind

The good news is that Provisioned Mode is not a new service with its own terrifying learning curve. It is just a configuration toggle on the event source mapping you are already using. You can set it up in the AWS Console, the CLI, or your Infrastructure as Code tool of choice.

The interface asks for two numbers, and this is where the engineering art form comes in.

First, it asks for Minimum Pollers. This is the number of workers you always want ready.

Second, it asks for Maximum Pollers. This is the ceiling, the limit you set to ensure you do not accidentally DDoS your own database.
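In code, those two numbers might end up in a request like the one sketched below. This assumes you update the mapping through boto3's `update_event_source_mapping` and that its `ProvisionedPollerConfig` field (with `MinimumPollers` and `MaximumPollers`) is the one used for SQS mappings; check the current API reference before relying on it. The helper name and the UUID are placeholders.

```python
def provisioned_mode_request(mapping_uuid: str, minimum: int, maximum: int) -> dict:
    """Build keyword arguments for an event source mapping update.

    Field names are assumed to match the Lambda API; verify them for SQS.
    """
    if minimum < 1:
        raise ValueError("minimum must be at least 1")
    if maximum < minimum:
        raise ValueError("maximum cannot be lower than minimum")
    return {
        "UUID": mapping_uuid,
        "ProvisionedPollerConfig": {
            "MinimumPollers": minimum,
            "MaximumPollers": maximum,
        },
    }


request = provisioned_mode_request("00000000-placeholder-uuid", minimum=2, maximum=40)
print(request)

# With AWS credentials configured, the call would look roughly like this:
#   import boto3
#   boto3.client("lambda").update_event_source_mapping(**request)
```

Validating the pair before sending it is cheap insurance against a typo that inverts the two values.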

Choosing these numbers feels a bit like gambling, but there is a logic to it. For the minimum, pick a number that comfortably handles your typical traffic plus a standard spike. Start small. Setting this to 100 when you usually need 2 is the serverless equivalent of buying a school bus to commute to work alone.

For the maximum, look at your downstream systems. There is no point in setting a maximum that allows 5,000 concurrent Lambda functions if your relational database curls into a fetal position at 500 connections.
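One way to turn that database limit into a poller ceiling is sketched below. It assumes each concurrent invocation holds one database connection and uses the figure of 10 concurrent invokes per poller quoted earlier. The 80 percent headroom is an arbitrary safety margin, and `max_pollers_for` is a hypothetical helper.

```python
def max_pollers_for(connection_limit: int,
                    invokes_per_poller: int = 10,
                    headroom_percent: int = 80) -> int:
    """Largest poller count whose invocations fit inside the connection budget."""
    if connection_limit <= 0 or invokes_per_poller <= 0:
        raise ValueError("limits must be positive")
    # Integer arithmetic keeps the result exact.
    usable_connections = connection_limit * headroom_percent // 100
    # Never return less than one poller, even for a tiny budget.
    return max(1, usable_connections // invokes_per_poller)


print(max_pollers_for(500))  # 40
```

If your function pools or reuses connections, the real ceiling may be higher; treat this as a conservative starting point.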

Once you enable it, you need to watch your metrics. Keep an eye on queue depth (`ApproximateNumberOfMessagesVisible` in CloudWatch) and the age of the oldest message (`ApproximateAgeOfOldestMessage`). If the backlog clears too slowly, buy more pollers. If your database administrator starts sending you angry emails in all caps, reduce the maximum. The goal is not perfection on day one; it is to replace guesswork with a feedback loop.
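That feedback loop can be written down as a rule of thumb. The sketch below is one possible heuristic, not an official algorithm: it takes a handful of metric samples you have already fetched and suggests a direction. `QueueSample`, `next_step`, and the majority-of-samples threshold are all invented for illustration.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class QueueSample:
    backlog: int          # messages waiting in the queue
    oldest_age_s: float   # age of the oldest message, in seconds


def next_step(samples: Sequence[QueueSample],
              max_age_s: float,
              downstream_overloaded: bool = False) -> str:
    """Suggest a tuning direction from recent queue samples."""
    if downstream_overloaded:
        return "lower the maximum"
    if not samples:
        return "keep watching"
    # A sample is "late" when work is waiting longer than the latency budget.
    late = sum(1 for s in samples if s.backlog > 0 and s.oldest_age_s > max_age_s)
    if late * 2 > len(samples):
        return "raise the minimum"
    return "keep watching"


recent = [QueueSample(100, 5), QueueSample(900, 40), QueueSample(1500, 75)]
print(next_step(recent, max_age_s=30))  # raise the minimum
```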

The financial hangover

Nothing in life is free, and this applies doubly to AWS features that solve headaches.

When you enable Provisioned Mode, AWS begins charging you for “Event Poller Units.” You pay for the minimum pollers you configure, regardless of whether there are messages in the queue. You are paying for readiness.

This is a mental shift for serverless purists. The whole promise of serverless was “pay for what you use.” Provisioned Mode is “pay for what you might need.”

You are essentially renting a standing army. Most of the time, they will just stand there, playing cards and eating your budget. But when the enemy (traffic) attacks, they are already in position. Standard SQS polling is cheaper because it relies on volunteers. Volunteers are free, but they take a while to put on their boots.

From a FinOps perspective, or simply from the perspective of explaining the bill to your boss, the question is not “Is this expensive?” The question is “What is the cost of latency?”

For a background report generator, a five-minute delay costs nothing. For a high-frequency trading platform, a five-second delay costs everything. You should not enable Provisioned Mode on every queue in your account. That would be financial malpractice. You reserve it for the critical paths, the workflows where the price of slowness is measured in lost customers rather than just infrastructure dollars.
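A back-of-the-envelope version of that question is sketched below. The hourly price is a made-up placeholder, not an AWS rate, and the model assumes for simplicity that the standby charge scales linearly with the minimum poller count. Look up the actual Event Poller Unit pricing for your region before drawing conclusions.

```python
HOURS_PER_MONTH = 730  # common approximation for billing estimates


def monthly_standby_cost(min_pollers: int, price_per_poller_hour: float) -> float:
    """Cost of keeping `min_pollers` on retainer for a month, busy or not."""
    return min_pollers * price_per_poller_hour * HOURS_PER_MONTH


def pays_for_itself(standby_cost: float,
                    slow_incidents_per_month: int,
                    loss_per_incident: float) -> bool:
    """True when the expected cost of slowness exceeds the standby bill."""
    return slow_incidents_per_month * loss_per_incident > standby_cost


cost = monthly_standby_cost(min_pollers=5, price_per_poller_hour=0.25)  # hypothetical price
print(cost)                                 # 912.5
print(pays_for_itself(cost, 2, 1000.0))     # True
print(pays_for_itself(cost, 0, 1000.0))     # False
```

The report generator lands in the last line; the payment queue lands in the one above it.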

Why you should care about the fourth dial

Architecturally, Provisioned Mode gives us a new layer of control. Previously, we had three main dials in event-driven systems: how fast we write to the queue, how fast the consumers process messages, and how much concurrency Lambda is allowed.

Provisioned Mode adds a fourth dial: how aggressively messages are pulled off the queue.

It allows you to reason about your system deterministically. If you know that one poller provides X amount of throughput, you can stack them to meet a specific Service Level Agreement. It turns a “best effort” system into a “calculated guarantee” system.
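As a sketch of that reasoning, the function below estimates how many pollers it would take to drain a backlog within a deadline. It assumes concurrency is the bottleneck (10 concurrent invokes per poller, as quoted earlier), ignores the 1 MB per second limit and any messages that arrive while draining, and uses hypothetical names throughout.

```python
import math


def pollers_needed(backlog_messages: int,
                   avg_invoke_seconds: float,
                   drain_within_seconds: float,
                   batch_size: int = 1,
                   invokes_per_poller: int = 10) -> int:
    """Pollers required to clear `backlog_messages` inside the deadline."""
    if avg_invoke_seconds <= 0 or drain_within_seconds <= 0:
        raise ValueError("durations must be positive")
    # Messages one poller can finish per second at full concurrency.
    per_poller_rate = invokes_per_poller * batch_size / avg_invoke_seconds
    required_rate = backlog_messages / drain_within_seconds
    return max(1, math.ceil(required_rate / per_poller_rate))


# 20,000 queued messages, 0.5 s per invocation, one-minute target.
print(pollers_needed(20_000, 0.5, 60))                 # 17
print(pollers_needed(20_000, 0.5, 60, batch_size=10))  # 2
```

The gap between 17 and 2 is a reminder that batch size is still one of the cheapest dials you have.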

Serverless was sold to us as freedom from capacity planning. We were told we could just write code and let the cloud handle the undignified details of scaling. For many workloads, that promise holds true.

But as your workloads become more critical, you discover the uncomfortable corners where “just let it scale” is not enough. Latency budgets shrink. Compliance rules tighten. Customers grow less patient.

AWS Lambda SQS Provisioned Mode is a small, targeted answer to that discomfort. It allows you to say, “I want at least this much readiness,” and have the platform respect that wish, even when your traffic behaves like a toddler on a sugar high.

So, pick your most critical queue. The one that keeps you awake at night. Enable Provisioned Mode, set a modest minimum, and watch the metrics. Your future self, staring at a flat latency graph during the next Black Friday, will be grateful you decided to stop trusting in magic and started paying for physics.